the good and bad news about prompt engineering

the most comprehensive guide on prompt engineering


At a small vibe-coding event I attended recently, I noticed something striking about how different people prompt AI. This could well be limited to that particular group, but I want to share it more broadly and hear your thoughts. Leave me a comment if you have noticed it too.

I noticed that people with backgrounds in communication, teaching, and the humanities (basically, non-technical roles) outperformed technically trained individuals, like computer science majors, at writing prompts that produced high-quality output.

So I came home and started digging to see whether anyone had studied this. I didn’t find a formal study, so in today’s newsletter I want to share my hypothesis on why this might be, along with 5 prompting styles (which cover most of how operators, product managers, and product marketers use AI today).

Save This Newsletter if...

✅ You want to write clearer, faster, smarter with AI

✅ You want to reduce rewriting, over-explaining, and tool frustration

✅ You want to lead by example as an AI-native operator

I am currently building a course, “Become an AI-powered PM” for operators, marketers, and product managers who want to start working with AI but are overwhelmed by the noise.

You will walk away with everything you need to know to get started with AI for your daily workflows, join the private community, and get access to my prompt library and script library.

I will be teaching it LIVE as a 2nd cohort starting in August. Sign up for the waitlist here.

Today’s Deep Dive

You can’t use AI without prompting.

Prompting is the steering wheel. Everything else is just hoping the car knows where you want to go.

Prompting is not about knowing how AI works at the code level. Having gone through ALL the guidance OpenAI, Anthropic, Google, and similar companies have published on prompt engineering (yes, tons of it), I boil it down to this 👇

Prompting is about knowing how to communicate a mental model clearly to a system that only understands logic, structure, and specificity.

At this vibe-coding event, I noticed that extremely talented, tech-savvy folks approached prompting like code: they optimized for brevity, assumed shared context, and skipped over the nuance of instructing a human. That led to vague prompts or a lot of iteration.

“Summarize this report.”

“Draft an onboarding flow.”

This may work in some situations, but at the event this kind of prompting led to subpar outputs. Time and again, the non-technical people wrote better-structured, more detailed prompts.

Here’s what strong communicators (be it any profession) tend to bring to the table:

- Clarity of Intent: They’re trained to convey ideas precisely and with audience awareness, a key skill when assigning roles and context to an AI model.

- Narrative Sequencing: They know how to structure a flow: purpose → format → constraints → desired output. This scaffolding is essential for strong prompts, and they do it naturally.

- Iterative Framing: They instinctively rephrase and reframe questions when misunderstood, a natural habit that aligns with effective AI iteration.

Prompt engineering sits at the intersection of logic and language. It borrows the structure of programming, but the craft comes from clear thinking, instructional design, and strategic delegation — all classic strengths of writers, product managers, marketers and communication professionals.

In fact, many leading prompt engineering courses (like those offered by AI-focused bootcamps, Notion AI workshops, or even OpenAI’s own documentation) now include lessons on roleplay, structure, and iterative refinement, none of which require technical credentials.

There’s a huge spotlight on building with AI (apps, agents, copilots), but most people skip over the core interface skill: prompting. Prompting is how you think with AI. It’s how you design interactions, extract insight, and guide output, regardless of whether you’re a builder or not.

The hype is on the tool layer. But the leverage is in the prompt layer.

Even developers building AI apps rely on prompting (in code, APIs, RAG systems, agents, etc.).

And most users don’t need to build apps. They need to build workflows.

And prompting is how they do it.

A few months ago, I was on a Zoom call with a brilliant product manager I coach. He’s a sharp thinker and a great communicator, but he was visibly frustrated.

“Every time I use ChatGPT, the answers sound robotic,” he said.

“Like, I’d never send this to my manager. I end up rewriting everything myself.”

He wasn’t the first person to say that to me.

So I asked him to share one of his prompts.

He typed:

“Write a weekly stakeholder update about our (XYZ) feature delay.”

No wonder the output felt robotic.

And for the next 20 minutes, I walked him through how I prompt based on the type of task (low to medium to high stakes) I’m doing.

🍉 If I’m brainstorming, I’ll use divergent prompts that prioritize idea quantity and open exploration.

🍉 If I’m delegating a writing task, I’ll use prompts that sound like briefing a team member: role, tone, audience, structure.

🍉 If I’m iterating, I’ll stack multiple rounds of prompts, tweaking the framing based on gaps in the output.

Then we rewrote his prompt together, like this:

“You’re a senior product leader writing a weekly stakeholder update for execs. The tone should be direct but not alarming. A roadmap feature is delayed. Summarize the situation, explain why it happened, what’s being done, and reset expectations clearly but calmly. Use bullet points, keep it under 300 words.” (He also added voice notes in ChatGPT describing the project and the situation.)
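If you like to think in templates, here is a minimal Python sketch of that same briefing structure (role, audience, tone, task, format). The `Brief` class and its field names are my own illustration, not part of any tool; the point is only that each part of the brief gets filled in on purpose.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """Hypothetical container for the parts of a delegation-style prompt."""
    role: str
    audience: str
    tone: str
    task: str
    output_format: str

    def to_prompt(self) -> str:
        # Assemble the parts in the order you would brief a teammate.
        return (
            f"You're {self.role} writing for {self.audience}. "
            f"The tone should be {self.tone}. "
            f"{self.task} "
            f"{self.output_format}"
        )


brief = Brief(
    role="a senior product leader",
    audience="execs",
    tone="direct but not alarming",
    task=(
        "A roadmap feature is delayed. Summarize the situation, explain why "
        "it happened, what's being done, and reset expectations clearly but calmly."
    ),
    output_format="Use bullet points, keep it under 300 words.",
)
print(brief.to_prompt())
```

If a field is hard to fill in, that is usually the context the AI was missing too.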

He hit Enter.

And then, his face lit up.

“Oh wow. This sounds like something I would write.”

That moment changed everything for him.

And that’s when I realized: Most people don’t have a “prompting problem.”

They just provide no context to AI.

They think AI is guessing their intent. But it’s only mirroring what they give it.

How to Build Your Own Prompt Framework

Your workflow deserves more than one-off prompts. It deserves a system.

One of the most powerful shifts I made as a product leader wasn’t just using ChatGPT.

It was designing my own prompt scaffolding and reusable templates I could plug into my work without starting from scratch each time.

Here’s a simple 6-part framework I teach in my live workshops. I call it PROMPT:

🧠 Now, Build Your Own in 60 Seconds

Let’s say you want to write a spec draft with AI. Fill in the blanks:

Prompt:

🎯 Example Plugged-In Version:

🪄 Save and Stack Your Best Prompts

Whenever you get a great AI output:

- Save the prompt in Notion, Google Docs, or your CRM

- Label it by use case (e.g., “Team Email,” “Meeting Summary,” “PRD Draft”)

- Tweak and version it over time — you’re building a personal AI toolkit

Over time, you’ll have your own prompt library that mirrors your voice, your workflows, and your standards.
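For those who would rather keep the library in code than in a doc, here is a rough sketch of the same habit: label by use case, keep every version, reuse the latest. The function names and the in-memory dictionary are assumptions for illustration; a Notion page or a Google Doc works just as well.

```python
# Hypothetical in-memory library: use-case label -> list of prompt versions.
library: dict[str, list[str]] = {}


def save_prompt(use_case: str, prompt: str) -> int:
    """Store a new version under its use-case label and return the version number."""
    versions = library.setdefault(use_case, [])
    versions.append(prompt)
    return len(versions)


def latest(use_case: str) -> str:
    """Return the most recent version saved for a use case."""
    return library[use_case][-1]


v1 = save_prompt("Team Email", "Write a team email about {topic}.")
v2 = save_prompt(
    "Team Email",
    "You're a product lead. Write a short, friendly team email about {topic}.",
)

# Reuse the latest version by filling in the blank.
print(latest("Team Email").format(topic="the Q3 roadmap"))
```

Older versions stay in the list, so you can always go back and compare what changed between a prompt that worked and one that did not.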

That’s how AI becomes your second brain, not just a search engine.

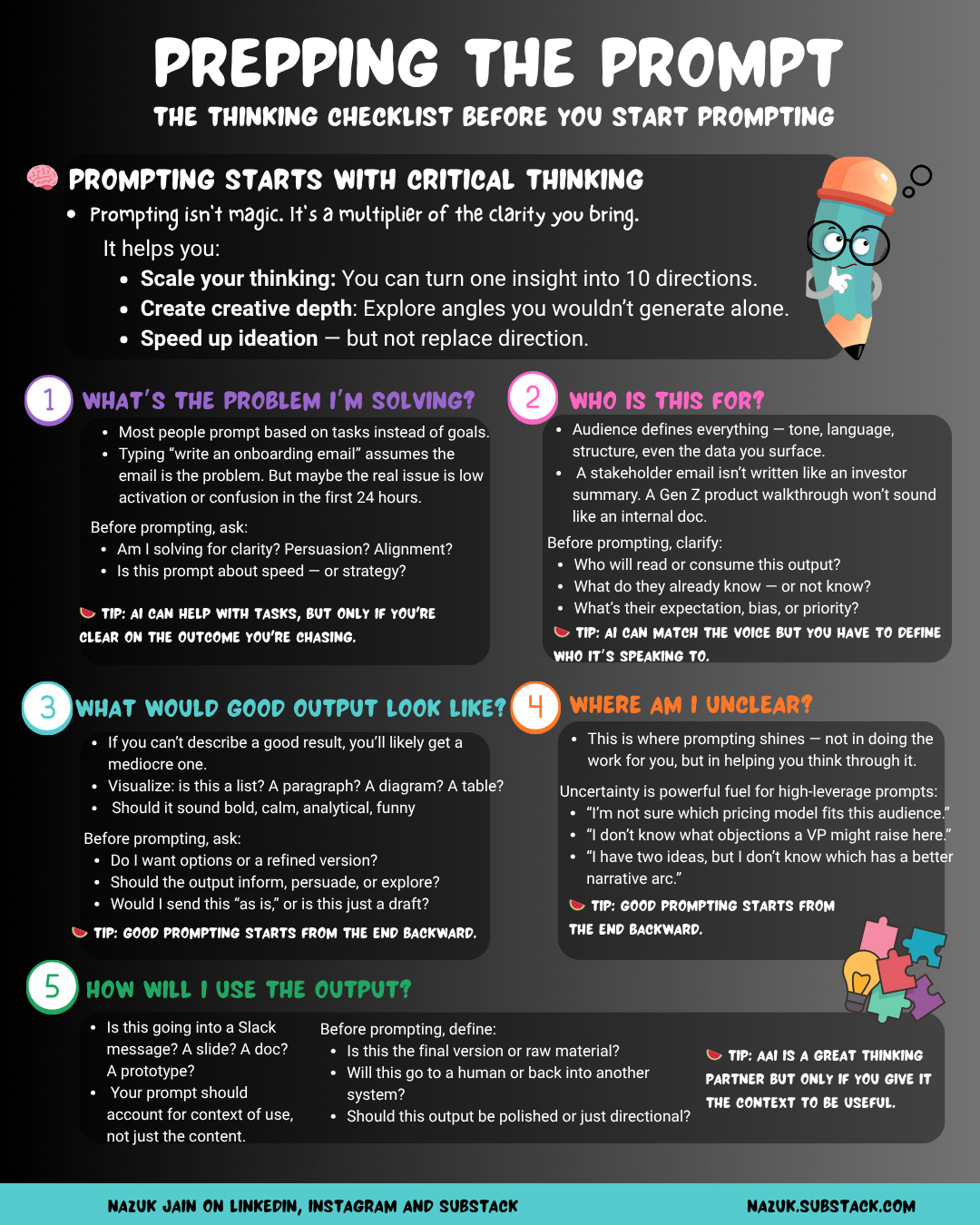

BEFORE you start prompting… here is a quick reminder

Prompting begins with critical thinking

Thanks for reading Product Career! Please feel free to share to support my work. Your share means a lot to me.

Here are 5 prompting techniques. Each technique mirrors a powerful cognitive model, and when paired with AI, it supercharges your thinking.

Run the prompts below in ChatGPT. I’m eager to hear how they work out for you.

1. 🧠 Chain of Thought Prompting (CoT)

A method where you ask the model to reason step-by-step, mirroring how humans think through logic or multi-part decisions.

This technique is powerful because it can reduce hallucinations and pushes the AI model to justify its output. It is especially useful for product tradeoffs, estimation, or roadmap prioritization.
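If you send prompts from a script, the pattern can be sketched as a small Python helper that adds the step-by-step request to any question. The function name and the exact wording of the instruction are my own assumptions, not an official recipe from any AI provider.

```python
def with_chain_of_thought(question: str) -> str:
    """Wrap a question with a request for step-by-step reasoning (wording is illustrative)."""
    return (
        f"{question}\n\n"
        "Think through this step by step. "
        "List your assumptions, reason through each option, "
        "and only then give your final recommendation."
    )


prompt = with_chain_of_thought(
    "Should we prioritize feature A or feature B next quarter?"
)
print(prompt)
```

The question stays yours; the helper only adds the instruction to show the reasoning before the answer.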

🍉 Example Prompt